LiDAR Matrix Sensor Gives Robots a 3D View of the World – But Half the Data Gets Lost

Breaking: New LiDAR Matrix Sensor Enables 3D Mapping for Small Robots – With Caveats

A new LiDAR matrix sensor, essentially a grid of 64 time-of-flight ranging sensors, is giving small robots the ability to see in 3D. Mellow_Labs, the creator who tested it on a robot named Zippy, reports that the sensor can build a 2D depth map covering distances from 2 cm to 3.5 meters. However, the first prototype reveals a major limitation: half of the sensor's rows point at the floor, wasting about 50% of its data.

"This sensor is incredibly exciting for autonomous navigation," said Mellow, the developer behind the project. "It’s like having 64 eyes instead of one – but we’re still learning how to use all of them effectively." The sensor is designed to help robots avoid obstacles and see what's ahead, a crucial step toward fully autonomous operation.

Background: What Is a LiDAR Matrix Sensor?

Unlike a standard single-point time-of-flight (ToF) sensor that measures distance to one spot, a LiDAR matrix sensor combines 64 ToF elements in a grid. This allows it to capture a 2D array of distances, effectively creating a low-resolution depth map of the scene in front of the robot. The sensor's short range (up to 3.5 meters) is well-suited for indoor robotics.
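To make the idea of a "2D array of distances" concrete, here is a minimal sketch of how 64 readings might be arranged into a depth map. It assumes the 64 zones form an 8x8 grid delivered in row-major order and reported in millimeters; the article does not state the layout or units, and the function names are hypothetical.

```python
from typing import List, Optional

ROWS, COLS = 8, 8          # assumed layout: 64 zones as an 8x8 grid
MIN_MM, MAX_MM = 20, 3500  # range quoted in the article: 2 cm to 3.5 m

Grid = List[List[Optional[int]]]


def to_depth_map(readings_mm: List[int]) -> Grid:
    """Reshape a flat list of 64 distances into an 8x8 grid.

    Readings outside the quoted range are stored as None.
    """
    if len(readings_mm) != ROWS * COLS:
        raise ValueError(f"expected {ROWS * COLS} readings, got {len(readings_mm)}")
    grid: Grid = []
    for r in range(ROWS):
        row: List[Optional[int]] = []
        for c in range(COLS):
            d = readings_mm[r * COLS + c]
            row.append(d if MIN_MM <= d <= MAX_MM else None)
        grid.append(row)
    return grid


def nearest_per_column(grid: Grid) -> List[Optional[int]]:
    """Return the closest valid distance seen in each column, or None."""
    result: List[Optional[int]] = []
    for c in range(COLS):
        valid = [grid[r][c] for r in range(ROWS) if grid[r][c] is not None]
        result.append(min(valid) if valid else None)
    return result
```

Collapsing each column to its nearest reading is one simple way a robot could decide whether the path to the left, center, or right is blocked.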

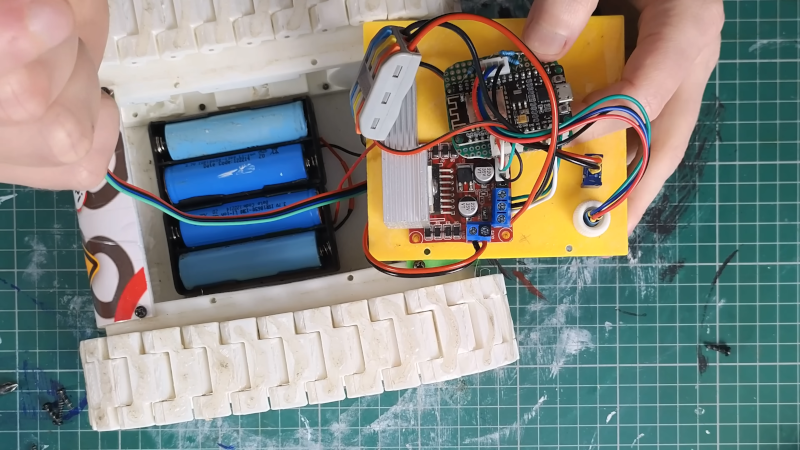

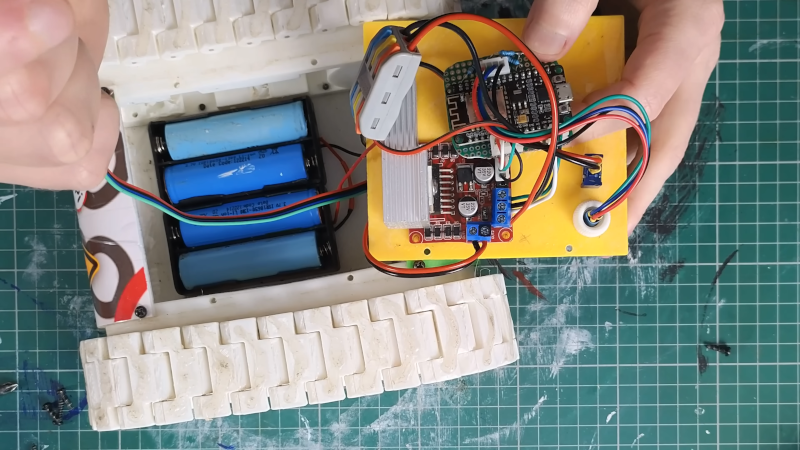

Mellow integrated the sensor into Zippy, a 3D‑printed tank‑style robot powered by an ESP32 microcontroller. Without the sensor, Zippy required manual control; with it, the robot could theoretically drive autonomously by sensing the environment. The project was documented in a video that shows early test runs.

Challenges: Code from AI and Data Loss

To speed up development, Mellow used an LLM (large language model) to write most of the software. "Iterations were required to get the code working," he explained. The LLM‑generated code also had to decimate the sensor data, which further reduced the effective resolution, on top of the half of the sensor rows already wasted on the floor.
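The combined effect of floor-facing rows and decimation can be illustrated with a short sketch. It is not the project's code: it assumes an 8x8 grid, that the bottom four rows are the ones pointing at the floor, and a decimation step of 2 on both axes, all of which are illustrative choices.

```python
from typing import List

Grid = List[List[int]]


def drop_floor_rows(grid: Grid, floor_rows: int) -> Grid:
    """Discard the last `floor_rows` rows (assumed to point at the floor)."""
    return grid[: len(grid) - floor_rows]


def decimate(grid: Grid, step: int) -> Grid:
    """Keep every `step`-th row and every `step`-th column."""
    return [row[::step] for row in grid[::step]]


def count_cells(grid: Grid) -> int:
    """Number of distance values left in the grid."""
    return sum(len(row) for row in grid)


# Hypothetical frame: each cell holds its own index so the result is easy to read.
frame = [[r * 8 + c for c in range(8)] for r in range(8)]
kept = decimate(drop_floor_rows(frame, floor_rows=4), step=2)
fraction_kept = count_cells(kept) / count_cells(frame)
```

Under these assumptions only 8 of the 64 values survive, so each step that looks modest on its own compounds quickly.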

Despite these hurdles, the system functioned. The robot could detect the floor in front of it – a useful feature both for avoiding drop‑offs and for approaching objects. Mellow considers the project a proof‑of‑concept for affordable 3D sensing on small robots.
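One way floor readings could be put to use is sketched below: compare a floor-facing row against the distance at which the floor is expected to appear. The function name, the 30% tolerance, and the labels are hypothetical; the article does not describe how Zippy interprets its floor rows.

```python
from statistics import median
from typing import List, Optional


def classify_floor_row(
    row_mm: List[Optional[int]],
    expected_mm: float,
    tolerance: float = 0.3,  # hypothetical: allow 30% deviation from expected
) -> str:
    """Label a floor-facing row as 'floor', 'drop-off', 'obstacle', or 'unknown'."""
    valid = [d for d in row_mm if d is not None]
    if not valid:
        return "unknown"  # no usable returns in this row
    typical = median(valid)
    if typical > expected_mm * (1 + tolerance):
        return "drop-off"  # floor is farther away than it should be
    if typical < expected_mm * (1 - tolerance):
        return "obstacle"  # something sits between the sensor and the floor
    return "floor"
```

Using the median rather than the minimum keeps a single noisy zone from triggering a stop.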

What This Means for Robotics

This sensor demonstrates that even budget‑oriented hardware can bring 3D perception to hobbyist robots. While a 50% data loss is significant, future versions could adjust the sensor mounting angle to capture more useful scene information. The use of LLMs for code generation also points to a trend where AI assists in robotics development – for better or worse.
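The effect of the mounting angle can be estimated with basic trigonometry. The sketch below counts how many rows have their central ray hitting the floor within range, for a given sensor height and upward tilt. The 8-row layout, the 45-degree vertical field of view, and the 5 cm mounting height are assumptions chosen for illustration, not figures from the project.

```python
import math

ROWS = 8                 # assumed: 64 zones arranged as 8 rows x 8 columns
VERTICAL_FOV_DEG = 45.0  # hypothetical vertical field of view
MAX_RANGE_M = 3.5        # maximum range quoted in the article


def rows_hitting_floor(height_m: float, tilt_up_deg: float) -> int:
    """Count rows whose central ray reaches the floor within MAX_RANGE_M."""
    step = VERTICAL_FOV_DEG / ROWS
    count = 0
    for i in range(ROWS):
        # Angle of this row's central ray relative to horizontal (negative = downward).
        angle_deg = tilt_up_deg - VERTICAL_FOV_DEG / 2 + (i + 0.5) * step
        if angle_deg >= 0:
            continue  # ray points level or upward and never meets the floor
        distance_m = height_m / math.sin(math.radians(-angle_deg))
        if distance_m <= MAX_RANGE_M:
            count += 1
    return count


for tilt in (0.0, 10.0, 22.5):
    print(f"tilt {tilt:>4} deg: {rows_hitting_floor(0.05, tilt)} of {ROWS} rows see floor")
```

With these assumed numbers, a level mount puts 4 of 8 rows on the floor, which matches the roughly 50% loss described above; a 10-degree upward tilt leaves 2 floor rows, and 22.5 degrees leaves none. Keeping a row or two on the floor may still be worthwhile if drop-off detection matters.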

For those interested in trying the technology, Mellow has shared the code. The project also serves as a reminder: hardware limitations can be mitigated by clever software and iterative testing, but the physical placement of sensors matters enormously.

Next Steps: Improving the Mount and the Code

Mellow intends to redesign Zippy's sensor mount to angle more rows forward, potentially recovering much of the wasted data. He also plans to refine the LLM‑written code to reduce decimation. "This is just the start – the matrix sensor has huge potential," he noted.

