How to Convert an Autoregressive Language Model into a Discrete Diffusion Model: A Step-by-Step Guide Using Zyphra's Approach

Introduction

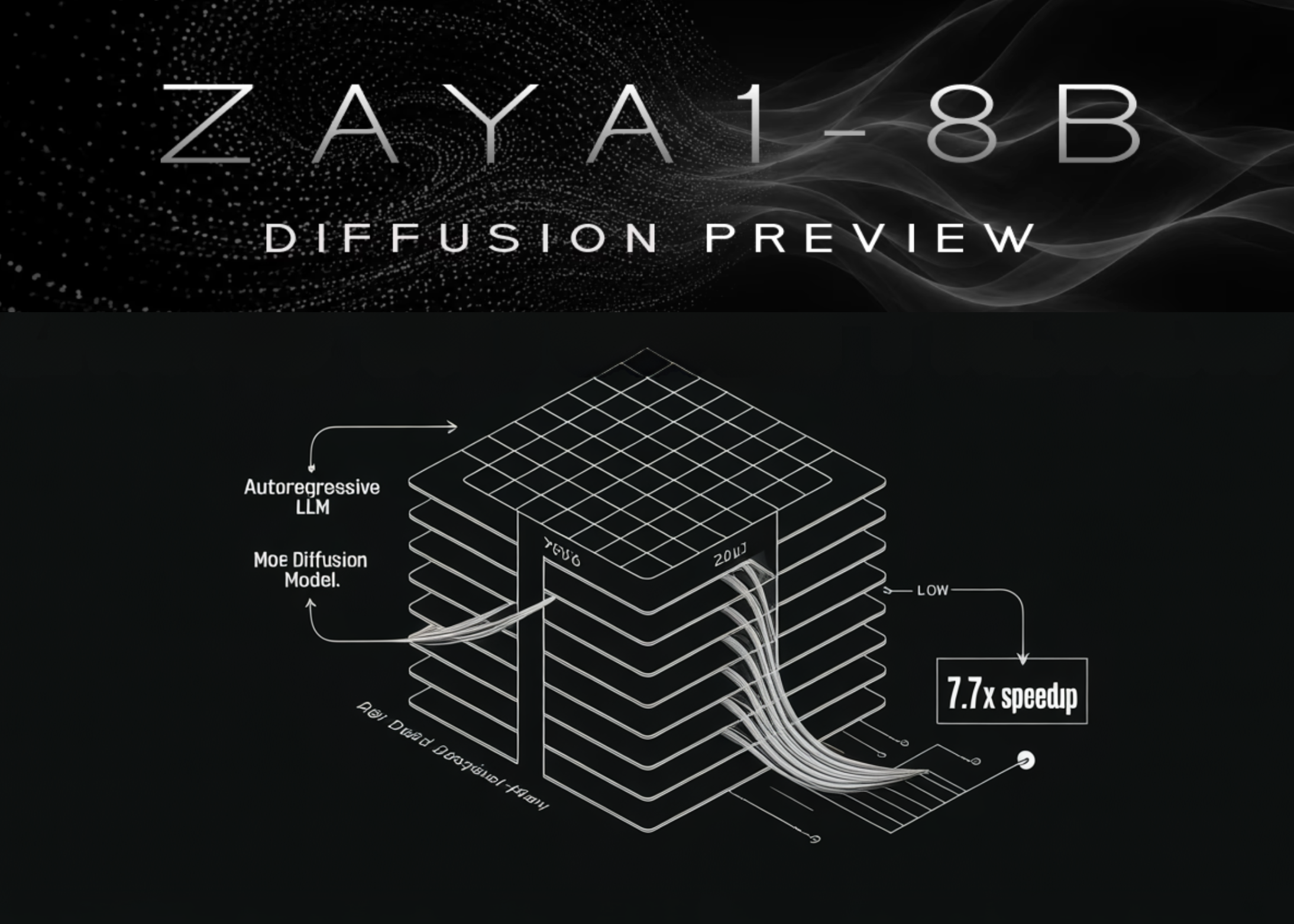

Training a discrete diffusion language model from scratch is notoriously difficult, with few established recipes. However, Zyphra recently demonstrated a practical alternative: converting an existing autoregressive LLM into a diffusion model. Their ZAYA1-8B-Diffusion-Preview achieves up to 7.7x speedup on AMD hardware without sacrificing evaluation performance. This guide walks you through the key steps they followed, based on the TiDAR conversion recipe. While specifics (token counts, context lengths) reflect Zyphra's implementation, the overall workflow is reproducible for other pretrained models.

What You Need

- Base pretrained autoregressive LLM (e.g., ZAYA1-8B-base or a comparable model with a similar architecture)

- TiDAR conversion recipe – the methodology for turning an autoregressive model into a discrete diffusion model

- High-performance GPU cluster – preferably with AMD hardware (MI250 or similar) to maximize the inference speedups

- Massive text dataset for mid-training – at least 600 billion tokens

- Long-context corpus for context extension – additional 500 billion tokens (used at 128k sequence length)

- Supervised fine-tuning (SFT) dataset – instruction/response pairs tailored for diffusion

- Familiarity with transformer model training pipelines (Hugging Face Transformers, PyTorch, etc.)

Step-by-Step Guide

Step 1: Understand the Bottleneck

Before converting, understand why diffusion helps. In autoregressive decoding, every generated token needs its own forward pass, and each pass must reload the model weights and the KV-cache from memory, so decoding is memory-bandwidth bound. Diffusion generates a block of N tokens in one pass, sharing a single KV-cache read across the block, which shifts the workload toward being compute-bound. This insight is foundational; it justifies the extra conversion effort.
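To make the memory-bound versus compute-bound argument concrete, here is a rough roofline-style estimate. It is a minimal sketch under stated assumptions: a dense model, about 2 FLOPs per weight per token, attention FLOPs ignored, and weights plus KV-cache read once per forward pass. The hardware and cache figures are hypothetical placeholders, not measurements from Zyphra or any specific GPU.

```python
def arithmetic_intensity(n_params, bytes_per_param, kv_cache_bytes, tokens_per_pass):
    """Approximate FLOPs performed per byte read from memory in one forward pass."""
    # Assumption: ~2 FLOPs (multiply + add) per weight for each token processed.
    flops = 2.0 * n_params * tokens_per_pass
    # Assumption: weights and KV-cache are each read once per pass,
    # regardless of how many tokens the pass produces.
    bytes_moved = n_params * bytes_per_param + kv_cache_bytes
    return flops / bytes_moved


def ridge_point(peak_flops_per_s, mem_bandwidth_bytes_per_s):
    """Intensity above which a kernel is compute-bound on the given hardware."""
    return peak_flops_per_s / mem_bandwidth_bytes_per_s


# Hypothetical numbers, chosen only for illustration.
N_PARAMS = 8e9          # 8B parameters, treated as dense
BYTES_PER_PARAM = 2     # 16-bit weights
KV_CACHE_BYTES = 2e9    # placeholder cache size
PEAK_FLOPS = 1e15       # placeholder accelerator peak
BANDWIDTH = 5e12        # placeholder memory bandwidth

if __name__ == "__main__":
    ridge = ridge_point(PEAK_FLOPS, BANDWIDTH)
    for block in (1, 4, 16, 64):
        ai = arithmetic_intensity(N_PARAMS, BYTES_PER_PARAM, KV_CACHE_BYTES, block)
        print(f"block={block:3d}  intensity={ai:8.2f} FLOP/byte  ridge={ridge:.0f}")
```

Under these assumptions, intensity grows linearly with the block size because the bytes moved stay fixed while the useful work multiplies. How close a real deployment gets to the ridge point depends on batch size, architecture, and kernel quality.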

Step 2: Select Your Base Model

Choose a pretrained autoregressive LLM that already performs well on your target tasks. Zyphra used ZAYA1-8B-base. The model should have been trained with a standard causal language modeling objective. Conversion pays off most when a model’s lifetime cost is dominated by inference rather than training, since diffusion’s memory-bandwidth advantage appears only at inference.

Step 3: Adopt the TiDAR Recipe

Zyphra built on the TiDAR (Think in Diffusion, Talk in Autoregression) approach. This recipe defines how to initialize a diffusion model from an autoregressive checkpoint: the original weights are kept and then trained further with a masking objective. You will likely need to modify your model’s forward pass so that it can predict several tokens in parallel from masked inputs. Implement the “single-step transformation from mask to token” as Zyphra did: the model predicts the token behind each mask directly, in one shot.
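One way to picture "parallel token prediction from masked inputs" is a hybrid attention pattern: causal over the already-generated prefix, bidirectional inside the block of mask tokens. The sketch below builds such an input and mask in plain Python. The function names and the exact mask layout are illustrative assumptions; the source does not spell out Zyphra's implementation, so check the TiDAR paper for the real layout.

```python
def build_block_input(prefix_ids, block_len, mask_id):
    """Append `block_len` mask tokens to the prefix; the model fills them in one pass."""
    return list(prefix_ids) + [mask_id] * block_len


def block_attention_mask(prefix_len, block_len):
    """Return mask[q][k] == True when query position q may attend to key position k.

    Assumed layout (hypothetical):
      - prefix positions attend causally, as in the original autoregressive model;
      - block positions attend to the whole prefix and to every position in the block.
    """
    total = prefix_len + block_len
    mask = []
    for q in range(total):
        if q < prefix_len:
            row = [k <= q for k in range(total)]   # causal row
        else:
            row = [True] * total                   # prefix + full block
        mask.append(row)
    return mask


if __name__ == "__main__":
    ids = build_block_input([11, 12, 13], block_len=2, mask_id=0)
    print(ids)
    for row in block_attention_mask(prefix_len=3, block_len=2):
        print("".join("x" if allowed else "." for allowed in row))
```

Keeping the prefix rows causal means the prefix computations match what the autoregressive checkpoint was trained on, which is one reason a cache built for the prefix can be reused by the block.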

Step 4: Continue Pre-Training in Diffusion Mode

With the architecture converted, run diffusion-conversion mid-training. Zyphra trained for an additional 600 billion tokens at a context length of 32k. The training objective shifts from next-token prediction to masked token prediction. This phase solidifies the new inference behavior. Ensure your dataset is diverse and covers many domains to avoid catastrophic forgetting.
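The shift from next-token prediction to masked token prediction can be sketched as follows: hide a random subset of tokens, then compute cross-entropy only at the hidden positions. This is a generic illustration in plain Python, not Zyphra's training code; the uniform masking ratio and the helper names are assumptions.

```python
import math
import random


def mask_tokens(token_ids, mask_id, mask_ratio, rng):
    """Replace a random subset of tokens with `mask_id`; return inputs and a mask flag list."""
    inputs, is_masked = [], []
    for tok in token_ids:
        hide = rng.random() < mask_ratio
        inputs.append(mask_id if hide else tok)
        is_masked.append(hide)
    return inputs, is_masked


def log_softmax(logits):
    """Numerically stable log-softmax over one position's vocabulary logits."""
    top = max(logits)
    log_z = top + math.log(sum(math.exp(x - top) for x in logits))
    return [x - log_z for x in logits]


def masked_cross_entropy(logits_per_position, targets, is_masked):
    """Mean negative log-likelihood of the true token, over masked positions only."""
    losses = [
        -log_softmax(logits)[target]
        for logits, target, hide in zip(logits_per_position, targets, is_masked)
        if hide
    ]
    return sum(losses) / len(losses) if losses else 0.0


if __name__ == "__main__":
    rng = random.Random(0)
    targets = [3, 1, 2, 0, 1]
    inputs, is_masked = mask_tokens(targets, mask_id=4, mask_ratio=0.5, rng=rng)
    # Stand-in for model output: uniform logits over a 4-token vocabulary.
    logits = [[0.0, 0.0, 0.0, 0.0] for _ in targets]
    print(inputs, is_masked, masked_cross_entropy(logits, targets, is_masked))
```

In a real pipeline the logits would come from the converted model's forward pass over `inputs`, and the same computation would be done with a tensor library rather than Python lists.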

Step 5: Extend the Context Length

After the initial conversion mid-training, perform native context extension. Zyphra boosted the context window from 32k to 128k using 500 billion additional tokens. This step is crucial for applications requiring long-form generation (documents, code, conversations). Use the same diffusion training objective during extension; the model learns to handle longer sequences efficiently.
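For planning purposes, the two token budgets translate into sequence and step counts as sketched below. The budgets and context lengths come from the text above; reading "32k" and "128k" as 32,768 and 131,072 tokens, and the `sequences_per_step` value, are assumptions made for illustration.

```python
def sequences_for_budget(token_budget, seq_len):
    """Number of full-length sequences that fit in a token budget."""
    return token_budget // seq_len


def optimizer_steps(token_budget, seq_len, sequences_per_step):
    """Number of optimizer steps, given how many sequences each step consumes."""
    return sequences_for_budget(token_budget, seq_len) // sequences_per_step


MID_TRAINING_TOKENS = 600_000_000_000   # from the text: 600 billion tokens
EXTENSION_TOKENS = 500_000_000_000      # from the text: 500 billion tokens
CONTEXT_32K = 32 * 1024                 # assumption: "32k" means 32,768
CONTEXT_128K = 128 * 1024               # assumption: "128k" means 131,072

if __name__ == "__main__":
    print(sequences_for_budget(MID_TRAINING_TOKENS, CONTEXT_32K))
    print(sequences_for_budget(EXTENSION_TOKENS, CONTEXT_128K))
    # Hypothetical global batch of 256 sequences per optimizer step.
    print(optimizer_steps(EXTENSION_TOKENS, CONTEXT_128K, sequences_per_step=256))
```

Under these assumptions, the mid-training phase covers roughly 18.3 million sequences and the extension phase roughly 3.8 million, so the long-context phase sees far fewer, much longer examples.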

Step 6: Supervised Fine-Tuning (SFT)

Apply a diffusion supervised fine-tuning (SFT) phase. Zyphra fine-tuned on a curated set of instruction-response pairs. The goal is to align the diffusion model with downstream tasks like chat, summarization, or code generation. Because the speedup comes from block-parallel decoding at inference time, the choice of SFT loss (standard next-token prediction or a masked variant) should not affect inference speed.
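If you choose the masked variant for fine-tuning, a common pattern is to leave the prompt intact and mask only response tokens, so the loss is computed on the answer alone. The sketch below shows that data preparation step. It is an assumption about how such a dataset might be built, not a description of Zyphra's pipeline, and `build_sft_example` is a hypothetical helper.

```python
import random


def build_sft_example(prompt_ids, response_ids, mask_id, mask_ratio, rng):
    """Return (inputs, loss_positions) for one instruction-response pair.

    The prompt is copied unchanged. Each response token is replaced by
    `mask_id` with probability `mask_ratio`; only those positions are
    returned as loss positions.
    """
    inputs = list(prompt_ids)
    loss_positions = []
    for offset, tok in enumerate(response_ids):
        if rng.random() < mask_ratio:
            inputs.append(mask_id)
            loss_positions.append(len(prompt_ids) + offset)
        else:
            inputs.append(tok)
    return inputs, loss_positions


if __name__ == "__main__":
    rng = random.Random(0)
    inputs, positions = build_sft_example(
        prompt_ids=[101, 102, 103],
        response_ids=[201, 202, 203, 204],
        mask_id=0,
        mask_ratio=0.5,
        rng=rng,
    )
    print(inputs, positions)
```

The returned positions index into `inputs`, so they can be used directly to select which logits enter the loss.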

Step 7: Evaluate Performance and Speedup

Finally, measure the model’s quality and speed. Zyphra reported no systematic loss of evaluation performance compared to the original autoregressive model. On AMD hardware, they observed up to 7.7x speedup due to better GPU utilization. Benchmark your own model on tasks like MMLU, HellaSwag, or custom use cases. Profile inference time, memory bandwidth usage, and compute efficiency to confirm the improvement.
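A simple timing harness is enough for a first speed comparison. The sketch below measures tokens per second for any callable and reports the ratio between two runs. The `generate_fn` interface is hypothetical; substitute your own autoregressive and diffusion generation calls, and add device synchronization if your framework runs asynchronously.

```python
import time


def tokens_per_second(generate_fn, n_tokens, repeats=3):
    """Best-of-`repeats` throughput for a callable that generates `n_tokens` tokens."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        generate_fn(n_tokens)
        best = min(best, time.perf_counter() - start)
    # Guard against a zero reading on very coarse timers.
    return n_tokens / max(best, 1e-9)


def speedup(baseline_tps, candidate_tps):
    """How many times faster the candidate is than the baseline."""
    return candidate_tps / baseline_tps


if __name__ == "__main__":
    # Stand-in workloads; replace with real model calls.
    def slow_generate(n):
        return [sum(range(200)) for _ in range(n)]

    def fast_generate(n):
        return [sum(range(20)) for _ in range(n)]

    base = tokens_per_second(slow_generate, 10_000)
    cand = tokens_per_second(fast_generate, 10_000)
    print(f"baseline={base:.0f} tok/s  candidate={cand:.0f} tok/s  speedup={speedup(base, cand):.2f}x")
```

Taking the best of several repeats reduces noise from warm-up and background load. For a fair comparison, use the same prompts, output lengths, batch size, and hardware for both models.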

Tips for Success

- Don’t train from scratch – Zyphra emphasizes that diffusion offers only inference-time benefits. Training is already compute-bound, so reuse your existing pretraining infrastructure.

- Match hardware to the conversion – The speedup is most pronounced on AMD GPUs because of their high compute-to-memory-bandwidth ratio. Test on your target hardware.

- Keep the diffusion step count minimal – Zyphra’s model uses a single mask-to-token transformation rather than iterative denoising. If you add refinement steps, keep them to one or two and measure the trade-off between quality and speed.

- Monitor KV-cache reuse – Block-level generation should share the cache across the block. Verify that your implementation avoids reloading per token.

- Use the TiDAR paper as a reference – For detailed mathematical and implementation details, consult the original TiDAR publication alongside Zyphra’s blog post.
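The tips on step count and cache reuse can be checked with a small decoding loop that counts forward passes. In this sketch, `predict_block` is a hypothetical stand-in for a model call that fills a whole block of masked positions in one pass. It does not model any verification or acceptance logic that a full recipe may include.

```python
def generate_blockwise(predict_block, prompt_ids, n_new_tokens, block_len):
    """Generate `n_new_tokens` in blocks; return (all tokens, number of forward passes)."""
    tokens = list(prompt_ids)
    forward_passes = 0
    remaining = n_new_tokens
    while remaining > 0:
        width = min(block_len, remaining)
        # One pass over the shared context produces `width` tokens at once.
        new_tokens = predict_block(tokens, width)
        if len(new_tokens) != width:
            raise ValueError("predict_block must return exactly `width` tokens")
        tokens.extend(new_tokens)
        forward_passes += 1
        remaining -= width
    return tokens, forward_passes


def toy_predict_block(context, width):
    """Toy stand-in for a model: continues counting from the last context token."""
    start = context[-1] + 1 if context else 0
    return list(range(start, start + width))


if __name__ == "__main__":
    for block_len in (1, 4, 16):
        _, passes = generate_blockwise(toy_predict_block, [0, 1, 2], 64, block_len)
        print(f"block_len={block_len:2d}  forward_passes={passes}")
```

With `block_len=1` the loop degenerates to token-by-token decoding, so the pass count equals the number of new tokens. Larger blocks cut the number of passes, and with it the number of times the context cache has to be read.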
